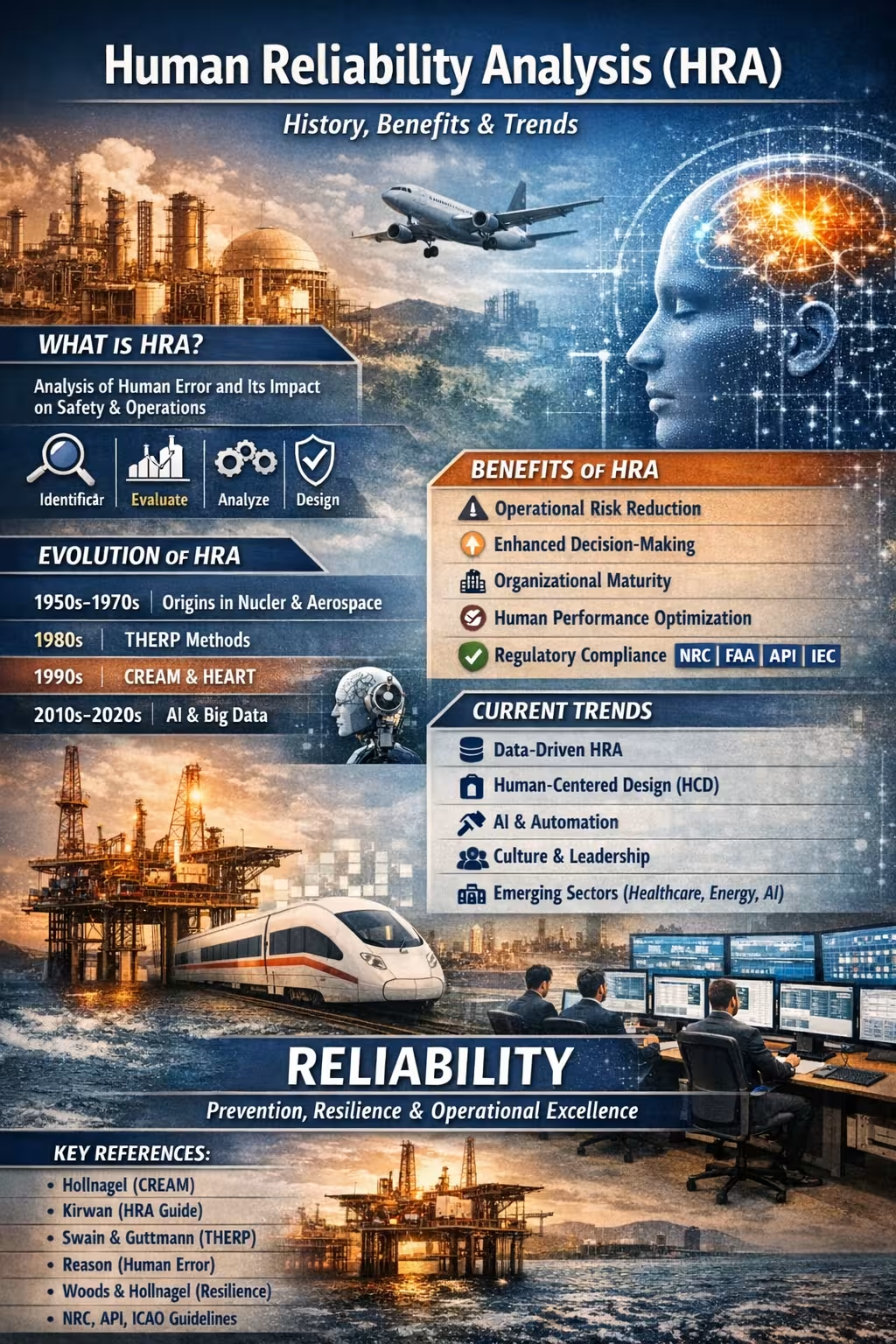

Human Reliability Analysis (HRA): History, Benefits, and Trends of a Discipline Essential to Operational Excellence

Abstract

Human Reliability Analysis (HRA) is a discipline that integrates engineering, cognitive psychology, human factors, and risk management to understand, predict, and reduce the likelihood of human error in complex systems. Its evolution has paralleled the development of critical industries—nuclear, aerospace, oil and gas, healthcare, and transportation—and today it stands as a strategic pillar for organizations seeking safety, operational continuity, and organizational maturity.

1. What Is Human Reliability Analysis (HRA)?

HRA is a set of qualitative and quantitative methods designed to:

- Identify critical tasks where human error may lead to significant consequences.

- Evaluate the probability of human failure (Human Error Probability, HEP).

- Analyze the factors influencing human performance (Performance Shaping Factors, PSF).

- Design barriers, defenses, and controls to reduce the likelihood and impact of error.

At its core, HRA recognizes that human error is not an individual flaw but a systemic phenomenon, shaped by organizational, technological, cognitive, and environmental conditions.
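To make the HEP and PSF terms above concrete, here is a minimal Python sketch. It assumes the simplest possible treatment: a point estimate of HEP as observed errors divided by opportunities for error, scaled by multiplicative PSF factors and capped at 1. The function names and the example multipliers are hypothetical and chosen only for illustration; published HRA methods define their own nominal values, PSF tables, and correction rules.

```python
from math import prod
from typing import Sequence


def observed_hep(errors: int, opportunities: int) -> float:
    """Point estimate of HEP: observed errors per opportunity for error."""
    if opportunities <= 0:
        raise ValueError("opportunities must be positive")
    if not 0 <= errors <= opportunities:
        raise ValueError("errors must be between 0 and opportunities")
    return errors / opportunities


def adjusted_hep(nominal_hep: float, psf_multipliers: Sequence[float]) -> float:
    """Scale a nominal HEP by the product of PSF multipliers.

    A multiplier above 1 degrades performance and one below 1 improves it.
    The result is capped at 1.0 so that it remains a valid probability.
    """
    if not 0.0 <= nominal_hep <= 1.0:
        raise ValueError("nominal_hep must be a probability")
    if any(m <= 0 for m in psf_multipliers):
        raise ValueError("PSF multipliers must be positive")
    return min(1.0, nominal_hep * prod(psf_multipliers))


# Illustrative numbers only: 3 errors in 1,000 opportunities, then two
# hypothetical PSFs (time pressure x2, poor interface x5).
nominal = observed_hep(errors=3, opportunities=1000)
degraded = adjusted_hep(nominal, [2.0, 5.0])
print(f"nominal HEP = {nominal:.4f}, adjusted HEP = {degraded:.4f}")
```

The cap matters in practice: with several strongly negative PSFs, a naive product can exceed 1, which is why some methods apply an explicit correction rather than a simple ceiling.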

2. Organizational Benefits of HRA

2.1. Operational Risk Reduction

HRA anticipates human failures before they occur, strengthening safety in critical operations.

2.2. Enhanced Decision-Making

It provides quantitative and qualitative data to prioritize investments, redesign processes, and optimize resources.

2.3. Organizational Maturity

By integrating culture, leadership, engineering, and change management, HRA fosters robust and resilient systems.

2.4. Human Performance Optimization

It supports the design of tasks, interfaces, procedures, and work environments that enable reliable performance.

2.5. Regulatory Compliance

HRA is required or recommended by regulators and standards bodies such as:

- NRC (Nuclear Regulatory Commission)

- FAA (Federal Aviation Administration)

- API (American Petroleum Institute)

- IEC (International Electrotechnical Commission)

3. Historical Evolution of HRA

The development of HRA can be divided into four major stages:

3.1. 1950–1970: Origins

- Emerged in nuclear and aerospace contexts.

- Recognized that advanced technological systems remain vulnerable to human error.

- Early models focused on error probability with mechanistic approaches.

3.2. 1980s: First Generation (HRA-I)

- Pioneering methods such as THERP (Technique for Human Error Rate Prediction) and HEART (Human Error Assessment and Reduction Technique).

- Quantitative focus based on task decomposition.

- Limitation: minimal consideration of cognitive and organizational factors.
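The task-decomposition idea behind first-generation methods can be illustrated with a short Python sketch. It assumes a deliberately simplified model: the task is a series of steps, each with its own HEP, the steps fail independently, and there is no recovery. Under those assumptions the task fails if any step fails. The step names and probabilities are hypothetical; methods such as THERP additionally model dependence between steps and recovery paths, which this sketch omits.

```python
from math import prod
from typing import Mapping


def task_failure_probability(step_heps: Mapping[str, float]) -> float:
    """Probability that at least one step fails.

    Assumes independent steps and no recovery:
    P(task fails) = 1 - product over steps of (1 - HEP_i).
    """
    for name, hep in step_heps.items():
        if not 0.0 <= hep <= 1.0:
            raise ValueError(f"HEP for step {name!r} must be a probability")
    return 1.0 - prod(1.0 - hep for hep in step_heps.values())


# Hypothetical decomposition of a valve-alignment task.
steps = {
    "read procedure step": 0.003,
    "select correct valve": 0.001,
    "verify valve position": 0.01,
}
print(f"task failure probability = {task_failure_probability(steps):.5f}")
```

For small HEPs the result is close to the plain sum of the step probabilities, which is why the least reliable step tends to dominate the total.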

3.3. 1990s–2000s: Second Generation (HRA-II)

- Introduction of cognitive and contextual models.

- Methods such as CREAM (Cognitive Reliability and Error Analysis Method) and ATHEANA (A Technique for Human Event Analysis).

- Recognition that error stems from systemic conditions, not solely operator actions.

3.4. 2010–2026: Third Generation and the Digital Era

- Integration with data analytics, artificial intelligence, and real-time monitoring.

- HRA merges with:

  - Organizational culture management

  - Advanced human factors

  - Cognitive ergonomics

  - Cyber-physical systems

- Focus on resilience, safe automation, and human-centered design.

4. Current Trends in HRA

4.1. Data-Driven HRA

Use of big data, machine learning, and predictive analytics to estimate error probabilities in real time.
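As one simple illustration of estimating an error probability from incoming data, the sketch below uses a conjugate Beta-binomial update: a prior belief about the HEP is revised each time a new batch of observations arrives. This is an assumed, textbook-style approach picked for brevity, not a description of any particular HRA tool; the class name, the prior, and the observation counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaHEP:
    """Beta(alpha, beta) belief about a human error probability."""

    alpha: float  # pseudo-count of errors
    beta: float   # pseudo-count of error-free performances

    def update(self, errors: int, successes: int) -> "BetaHEP":
        """Return the posterior after observing a new batch of task executions."""
        if errors < 0 or successes < 0:
            raise ValueError("counts must be non-negative")
        return BetaHEP(self.alpha + errors, self.beta + successes)

    @property
    def mean(self) -> float:
        """Posterior mean estimate of the HEP."""
        return self.alpha / (self.alpha + self.beta)


# Hypothetical prior centred on HEP = 0.01, then two batches of field data.
belief = BetaHEP(alpha=1.0, beta=99.0)
for errors, successes in [(2, 98), (0, 200)]:
    belief = belief.update(errors, successes)
    print(f"updated HEP estimate = {belief.mean:.4f}")
```

Because the update only adds counts, it can run each time new observations are logged, which is the sense in which such an estimate can track conditions as they change.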

4.2. Integration with Management Systems

HRA is embedded in:

- Safety Management Systems (SMS)

- Asset Management Systems (ISO 55000)

- Reliability and Maintenance Systems (Reliability-Centered Maintenance, RCM; Risk-Based Inspection, RBI)

4.3. Human-Centered Design (HCD)

Interfaces, procedures, and systems are designed to reduce cognitive load and enhance reliability.

4.4. Culture and Leadership as Critical Variables

Human error is understood as a symptom of organizational conditions, not a root cause.

4.5. HRA for Automation and AI

Evaluation of:

- Human–machine interaction

- Automation dependency

- Emerging risks from autonomous systems

4.6. HRA in Emerging Sectors

- Digital healthcare

- Renewable energy

- Autonomous mobility

- Industrial cybersecurity

5. Conclusion

Human Reliability Analysis has evolved from simple probabilistic models into a strategic discipline that integrates engineering, psychology, culture, and technology. In an increasingly complex world, HRA not only prevents errors—it builds safer, more resilient, and future-ready organizations.

6. Recommended References

- Hollnagel, E. (1998). Cognitive Reliability and Error Analysis Method (CREAM). Elsevier.

- Kirwan, B. (1994). A Guide to Practical Human Reliability Assessment. Taylor & Francis.

- Swain, A. D., & Guttmann, H. E. (1983). Handbook of Human Reliability Analysis with Emphasis on Nuclear Power Plant Applications (NUREG/CR-1278, the THERP handbook). U.S. NRC.

- Reason, J. (1990). Human Error. Cambridge University Press.

- Williams, J. C. (1986). HEART: Human Error Assessment and Reduction Technique.

- Hollnagel, E., Woods, D. D., & Leveson, N. (Eds.). (2006). Resilience Engineering: Concepts and Precepts. Ashgate.

- U.S. Nuclear Regulatory Commission (NRC). (2000–2020). Human Reliability Analysis Reports.

- API RP 1173. Pipeline Safety Management Systems.

- ICAO. Human Factors Guidelines for Safety Audits.
